I’ve not written a blog post for ages. Maybe sporadic posts are inevitable. Anyway, here’s one which has been sitting in draft form for a couple of years, and I’ve just managed to drag it up to date.

tl;dr Compute and store a high-resolution sampling of the Mandelbrot set, in a form which can be incrementally updated (e.g. to increase the maximum iteration count) and is independent of any image subsequently generated from it.

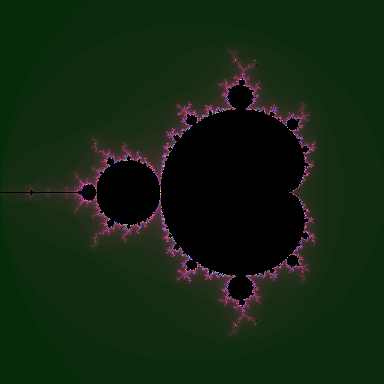

I’ve been somewhat fascinated by fractals for over two decades now (that makes me feel old :-) ), and the Mandelbrot set is both common and relatively easy to understand and program. I’m not going to go into details here - take a look at the Wikipedia page.

The usual thing with Mandelbrot plotters is to evaluate the Mandelbrot set over a given area of the complex plane and render the result as a colourful picture. Depending on the hardware, area selected, and precision, this can take from milliseconds (rough rendering on a GPU) to many hours. But however long it takes, the typical process is to re-evaluate it in real time, each time. I’ve done an example of that in JavaScript here. There are many others in all sorts of programming languages.

brotmap is a bit different - it’s thinking about the question “What if we pre-calculated and stored the Mandelbrot set, to a sensible degree of accuracy, such that we could render images from the pre-calculated version?”

An analogy could be a sampling synthesizer. The work required to produce a tone from a sampler is considerably less than from a complex synth. Back in the day (two decades ago) I would pre-generate tables of sines for graphical plasma effects and so on, because a table lookup was much faster than a sin(x) calculation, even on a top-of-the-range 486. Today that would be crazy: memory is now the bottleneck, and table lookups of just about any sort are to be regarded with suspicion.

But that is exactly the point and purpose of brotmap. Its grand but insane idea is this: let’s precalculate the Mandelbrot set. (Well, actually the point and purpose of brotmap is to have a play around and maybe try out some new (or not-so-new) things along the way, but that’s not very profound).

There are a couple of things which need to be decided before we go off and do such a silly thing. What are the input parameters? What is the end result? Starting with the output format, a coloured image isn’t much use to anyone; we need something lower level. What we really want is the iteration count at bailout; that is what the colours in funky fractal images are based on anyway. By storing the iteration count, we can apply any colour map we like at a later point, turn the map into a 3D height map, or do anything else which may or may not be interesting.

On the input side, we need to specify the area we are interested in, the resolution, and the maximum iteration count. A square area from -2..+1 on the real axis and -1.5..+1.5 on the imaginary axis works well as an outer boundary, and the resolution can be as high as we like. For performance and accuracy we want each point to be exactly representable as a floating point number, so brotmap uses a step size of $2^{-n}$ for some n: every sample coordinate is then a multiple of a power of two, which a double holds exactly for any sensible n.
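A minimal sketch of why the power-of-two step matters: the hypothetical helper `grid_coord` below (not brotmap’s actual code) computes the coordinate of grid index k as origin + k · $2^{-n}$. Multiplying by a power of two doesn’t round, and the sum is a multiple of $2^{-n}$ no larger than 2 in magnitude, so every coordinate comes out exact. A decimal step such as 0.001 has no exact binary representation, so its sample coordinates would all carry rounding error.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical helper: coordinate of grid index k along an axis starting at
// `origin`, with a step of 2^-bits. std::ldexp(1.0, -bits) is exactly 2^-bits,
// and scaling by a power of two is exact for any reasonable bit size, so the
// result is exact as long as it fits in a double's 53-bit significand.
double grid_coord(double origin, std::int64_t k, int bits) {
    return origin + static_cast<double>(k) * std::ldexp(1.0, -bits);
}

// Samples along an axis of the given length: each unit holds 2^bits samples.
std::int64_t samples_per_axis(std::int64_t axis_length, int bits) {
    return axis_length << bits;
}
```

For example, with 10 bits the real axis has `samples_per_axis(3, 10)` = 3072 samples, and `grid_coord(-2.0, 3072, 10)` lands exactly on +1.0.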

There is no point having high resolution if we don’t also have a high maximum iteration count. One key ‘feature’ of brotmap is that it allows incremental increases in iteration count. If a map is made with a MAX_ITER count of 1024, then the work generating that map can be reused by using it as a starting point in further iterations. To achieve this, not only is the iteration-count-at-bailout stored for each point, but also (for points which have so far not reached bailout), the current value of the complex number in the iterative calculation. To prevent precision loss, these are stored as a pair of double precision numbers (2x8 bytes per point). But if the point is definitely not in the M-set, then we no longer need that information - just the iteration count.
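The resume step can be sketched as follows, assuming (hypothetically) that the previous maximum iteration count is known for the whole map and that each unescaped point stores z after exactly that many iterations. The names `point_state` and `resume_iteration` are illustrative only. Escape is tested as |z|² > 4, which avoids a square root.

```cpp
#include <cassert>

// Hypothetical per-point state: the current value of z for a point which has
// not (yet) escaped.
struct point_state {
    double re;
    double im;
};

// Continue iterating z -> z^2 + c (c = cr + ci*i) for iterations done+1 ..
// max_iter, starting from the stored z. Returns the iteration at which |z|
// exceeded 2, or 0 if the point is still bounded at max_iter; in that case z
// is written back so that a later run can carry on from max_iter. On escape,
// z is left untouched, because only the iteration count is kept.
long resume_iteration(point_state& z, double cr, double ci, long done, long max_iter) {
    double re = z.re, im = z.im;
    for (long i = done + 1; i <= max_iter; ++i) {
        double new_im = 2.0 * re * im + ci;
        re = re * re - im * im + cr;
        im = new_im;
        if (re * re + im * im > 4.0) {
            return i;  // escaped at iteration i
        }
    }
    z.re = re;
    z.im = im;
    return 0;
}
```

Running c = 1 to 2 iterations and then resuming to 10 gives the same escape iteration (3) as running to 10 in one go, which is exactly what makes the incremental approach work.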

Anonymous unions to the rescue

These maps clearly get rather large. At a step size of just $2^{-10}$, there are $3 \times 3 \times 2^{10} \times 2^{10} = 9{,}437{,}184$ points (about 9.4 million): $3 \times 3$ is the area of the region on the complex plane, and each unit contains $2^{10}$ points per row and $2^{10}$ rows. And each of these has to store a good few bits of data - at least two double precision floating point values for points which could still be found to be in the M-set, and the bailout iteration count for those that have been excluded from the set.

Since we only care about either the current iteration values of re and im, or the number of iterations at which we exceeded our bailout condition, we can use unions to store both sets of information in the same space. But we also need a way of determining which type of data each point contains. Fortunately, IEEE 754 floating point comes to our rescue here, because there are some special bit patterns we can use as sentinels - they will never appear in the course of (our) floating point evaluations, but we can set them and detect them. Amongst these values are the NaNs. Not-a-Number values allow us to use one of the pair of doubles (x in the struct below) to indicate that the point is outside the M-set, and that the other value should be treated as an integer iteration count.

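```cpp
struct pinfo {
    double x;            // current real part of z, or NaN once the point has escaped
    union {              // anonymous union: members are used as p.y / p.itercount
        double y;        // current imaginary part of z (point not yet escaped)
        long itercount;  // iteration count at bailout (point has escaped)
    };
};
```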

One of the great things about C++ is its support for anonymous unions. That union in the pinfo struct? No name. Anonymous, you might say. Its members are accessed exactly as if they were direct members of the struct (p.y, p.itercount), so nothing else in the code needs to know that the structure even contains a union.
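A minimal sketch of how the NaN sentinel and the anonymous union fit together. The helper names `mark_escaped`, `has_escaped` and `escape_count_or_zero` are hypothetical, not taken from brotmap. Because the tag lives in x, outside the union, the code always knows which union member was written last and only ever reads that one; reading the other member would be type punning, which standard C++ doesn’t guarantee. Note too that options such as GCC’s `-ffast-math` let the compiler assume NaNs never occur, which can silently break a `std::isnan` check.

```cpp
#include <cassert>
#include <cmath>
#include <limits>

struct pinfo {
    double x;
    union {
        double y;
        long itercount;
    };
};

// Hypothetical helper: record that a point escaped after `iterations`
// iterations. x becomes a quiet NaN (the sentinel) and itercount becomes the
// active member of the anonymous union, overwriting y.
inline void mark_escaped(pinfo& p, long iterations) {
    p.x = std::numeric_limits<double>::quiet_NaN();
    p.itercount = iterations;
}

// Hypothetical helper: iterating z -> z^2 + c with a bailout never produces a
// NaN from finite values, so a NaN in x can only be the sentinel.
inline bool has_escaped(const pinfo& p) {
    return std::isnan(p.x);
}

// Read the union member that is currently active, guided by the sentinel.
inline long escape_count_or_zero(const pinfo& p) {
    return has_escaped(p) ? p.itercount : 0;
}
```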

To make the point clearer, the pinfo struct could have looked like this instead:

```cpp
struct pinfo {
    double x;
    double y;
    long itercount;
};
```

and nothing else in the code would have to change, except that we would be using 50% more storage: 24 bytes per point instead of 16 (assuming a long is also 8 bytes, as on typical 64-bit Linux and macOS systems).

OK, so we have a basic input spec, output spec, and the M-set calculation itself is straightforward. But we’ve still got to write out gigabytes or more of data for anything interesting. We don’t want messy IO code cluttering up the rest of the code, do we?

mmap to the rescue

mmap is awesome. It’s not the easiest API to set up and clean up, but neither is it difficult, and in between those steps it gets out of your way. Like totally-invisible out of your way. I can imagine that using it with a 32-bit virtual address space would be a pain, as you’d have to keep re-mapping different sections of a large (multi-gigabyte) file into the limited address space, but with a 64-bit virtual address space it feels like magic. That structure of millions of 16-byte points? Wave a wand, and it’s backed by a file. No read or write operations, nothing else at the user level. No stdio buffering, flushing, or seeking. Just the C(++) memory model, and the OS does the rest. It feels like cheating - and maybe it is, to use it like this - but remember this is a crazy pointless program, right?
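For illustration, here’s a minimal POSIX sketch of backing an array of points with a file. The helpers `map_points` and `unmap_points` are hypothetical, error handling is reduced to returning `nullptr` or `false`, and a 64-bit address space is assumed for large maps. Once mapped, the array is used like any other memory; `msync` and `munmap` at the end push the data back to the file.

```cpp
#include <cassert>
#include <cstddef>
#include <fcntl.h>
#include <sys/mman.h>
#include <unistd.h>

struct pinfo {
    double x;
    union {
        double y;
        long itercount;
    };
};

// Hypothetical helper: open (or create) `path`, size it to hold `count`
// records (ftruncate zero-fills any extension), and map it read/write.
// Returns nullptr on any error.
pinfo* map_points(const char* path, std::size_t count) {
    int fd = open(path, O_RDWR | O_CREAT, 0644);
    if (fd < 0) return nullptr;
    std::size_t bytes = count * sizeof(pinfo);
    if (ftruncate(fd, static_cast<off_t>(bytes)) != 0) {
        close(fd);
        return nullptr;
    }
    void* addr = mmap(nullptr, bytes, PROT_READ | PROT_WRITE, MAP_SHARED, fd, 0);
    close(fd);  // the mapping remains valid after the descriptor is closed
    return addr == MAP_FAILED ? nullptr : static_cast<pinfo*>(addr);
}

// Hypothetical helper: flush dirty pages to the file, then remove the mapping.
bool unmap_points(pinfo* points, std::size_t count) {
    std::size_t bytes = count * sizeof(pinfo);
    bool synced = msync(points, bytes, MS_SYNC) == 0;
    return munmap(points, bytes) == 0 && synced;
}
```

Everything between the two calls is ordinary array access, e.g. `pts[i].x = ...`; there is no explicit read or write anywhere.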

pthreads to the rescue

Mandelbrot calculation is a trivially parallelizable problem, since every point can be computed independently. And I have multiple cores in my machine (only two, but…), so it would be nice to get a speedup from them. Sadly I’m more than a little late to this party. The C++11 standard has threading support, and I’ll use this as an opportunity to learn that later, but for now I’ve learnt the minimum of pthreads coding to get this working. It’s simple enough: use pthread_create to create each thread, and put a mutex lock around shared data.
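A sketch of that approach, with hypothetical names and a simple grid of escape counts rather than brotmap’s actual data structure. Each thread repeatedly takes the next unprocessed row from a shared counter, which is the only shared data the mutex protects; handing out rows one at a time keeps the threads balanced, since rows crossing the set take far longer than rows outside it. Depending on the toolchain, it may need compiling with `-pthread`.

```cpp
#include <cassert>
#include <cstddef>
#include <pthread.h>
#include <vector>

// Escape iteration for c = cr + ci*i, or 0 if still bounded after max_iter.
static int escape_iter(double cr, double ci, int max_iter) {
    double re = 0.0, im = 0.0;
    for (int i = 1; i <= max_iter; ++i) {
        double new_im = 2.0 * re * im + ci;
        re = re * re - im * im + cr;
        im = new_im;
        if (re * re + im * im > 4.0) return i;
    }
    return 0;
}

// Hypothetical shared state. Only next_row is modified by several threads,
// so it is the only field guarded by the mutex; each row of `counts` is
// written by exactly one thread.
struct work_queue {
    pthread_mutex_t lock;
    int next_row;
    int rows, cols, max_iter;
    std::vector<int>* counts;
};

static void* worker(void* arg) {
    work_queue* q = static_cast<work_queue*>(arg);
    for (;;) {
        pthread_mutex_lock(&q->lock);
        int row = q->next_row++;
        pthread_mutex_unlock(&q->lock);
        if (row >= q->rows) break;
        // Map the grid onto -2..+1 (real) by -1.5..+1.5 (imaginary).
        double ci = -1.5 + 3.0 * row / q->rows;
        for (int col = 0; col < q->cols; ++col) {
            double cr = -2.0 + 3.0 * col / q->cols;
            (*q->counts)[static_cast<std::size_t>(row) * q->cols + col] =
                escape_iter(cr, ci, q->max_iter);
        }
    }
    return nullptr;
}

// Compute a rows x cols grid of escape counts using up to nthreads threads.
std::vector<int> compute_grid(int rows, int cols, int max_iter, int nthreads) {
    std::vector<int> counts(static_cast<std::size_t>(rows) * cols, 0);
    work_queue q;
    pthread_mutex_init(&q.lock, nullptr);
    q.next_row = 0;
    q.rows = rows;
    q.cols = cols;
    q.max_iter = max_iter;
    q.counts = &counts;

    std::vector<pthread_t> threads(nthreads > 0 ? nthreads : 0);
    int started = 0;
    for (int t = 0; t < nthreads; ++t)
        if (pthread_create(&threads[started], nullptr, worker, &q) == 0) ++started;
    if (started == 0) worker(&q);  // no thread could start: do the work here
    for (int t = 0; t < started; ++t) pthread_join(threads[t], nullptr);
    pthread_mutex_destroy(&q.lock);
    return counts;
}
```

The single-threaded and multi-threaded results should be identical; only the wall-clock time changes.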

Rendering the data

Of course, this wouldn’t be much fun without actually being able to have some visual representation of the output, so make_ppm is a separate program which reads the data files and outputs a PPM file rendering the M-set in basic greyscale. Colour maps can wait :-)

Note I’m just using PPM as a lowest-common-denominator file format. It’s trivial to write for this sort of thing, though it does produce large (uncompressed) files, taking 3 bytes per pixel.
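To show how little there is to the format, here’s a sketch of writing a greyscale P6 image from a grid of iteration counts. The linear mapping, and the convention that a count of 0 means “still in the set” (drawn black), are assumptions for illustration, not make_ppm’s actual behaviour; `grey_level`, `make_p6` and `write_p6` are hypothetical names. A P6 file is just a short ASCII header (magic number, width, height, maximum value) followed by raw RGB bytes. A grey pixel repeats the same byte three times, which is where the 3 bytes per pixel come from.

```cpp
#include <cassert>
#include <cstdio>
#include <string>
#include <vector>

// Hypothetical mapping: a count of 0 means "not escaped" and is drawn black;
// escaped points get brighter the longer they took to escape.
unsigned char grey_level(long count, long max_iter) {
    if (count <= 0) return 0;
    if (count >= max_iter) return 255;
    return static_cast<unsigned char>(255 * count / max_iter);
}

// Build a binary PPM (P6): ASCII header "P6\n<width> <height>\n255\n",
// then width * height pixels of 3 bytes (R, G, B) each.
std::string make_p6(const std::vector<long>& counts, int width, int height, long max_iter) {
    std::string out = "P6\n" + std::to_string(width) + " " +
                      std::to_string(height) + "\n255\n";
    for (long i = 0; i < static_cast<long>(width) * height; ++i) {
        char g = static_cast<char>(grey_level(counts[i], max_iter));
        out.append(3, g);  // equal R, G and B values give a grey pixel
    }
    return out;
}

// Write the image to disk; returns false on any I/O error.
bool write_p6(const char* path, const std::string& image) {
    std::FILE* f = std::fopen(path, "wb");
    if (!f) return false;
    bool ok = std::fwrite(image.data(), 1, image.size(), f) == image.size();
    return std::fclose(f) == 0 && ok;
}
```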

pnmtopng will convert a PPM file to the more useful PNG format. (pnmtopng is part of netpbm, which is available for most Linux distributions or via Homebrew on Mac, though ppm2tiff seems to be pre-installed on Mac and will suffice.)

Running it

The code for brotmap is available on Bitbucket, or GitHub if you prefer that.

The makefile includes a target which will build and display the output (subject to dependencies - tested on Linux & Mac OS X with netpbm installed):

```sh
make show
```

This will compile the two programs (brotmap and make_ppm), and then run things (ignoring directories etc) as follows:

```sh
./brotmap mandel.dat 10
./make_ppm mandel.dat out.ppm
pnmtopng out.ppm > image.png
open image.png
```

This computes a set of data for a 3072x3072 sampling of the Mandelbrot set, then renders a PPM file from it, converts to a more friendly format, and then (hopefully) displays it on-screen.

brotmap takes two arguments: the target filename, and a ‘binary digits’ value (bit_size in the table below), which sets the resolution of the computed data. Note that the output files get large:

| bit_size | resolution (x × y) | points | approx. file size (16 bytes/point) |
|---|---|---|---|
| 10 | 3072x3072 | 9437184 | 144 MiB |
| 11 | 6144x6144 | 37748736 | 576 MiB |
| 12 | 12288x12288 | 150994944 | 2.25 GiB |
| 13 | 24576x24576 | 603979776 | 9 GiB |
| 14 | 49152x49152 | 2415919104 | 36 GiB |
| 15 | 98304x98304 | 9663676416 | 144 GiB |
| 16 | 196608x196608 | 38654705664 | 576 GiB |

The default used by the various make targets is a bit size of 10. A bit size of 12 is fairly quick, and I’ve tried 14 once or twice.
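The figures in the size table follow directly from the grid: $3 \times 2^{n}$ samples along each axis, squared, at 16 bytes per point. A small sketch with hypothetical function names reproduces them, ignoring any file header:

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>

// Samples per axis: the -2..+1 and -1.5..+1.5 ranges are 3 units long,
// sampled every 2^-bits.
std::uint64_t side_for_bits(int bits) {
    return std::uint64_t{3} << bits;
}

std::uint64_t points_for_bits(int bits) {
    std::uint64_t side = side_for_bits(bits);
    return side * side;
}

// Data size at 16 bytes per point (two doubles), ignoring any header.
std::uint64_t bytes_for_bits(int bits) {
    return points_for_bits(bits) * 16;
}

// Print one row per bit size, with the size in binary gigabytes (GiB).
void print_size_table(int first_bits, int last_bits) {
    for (int b = first_bits; b <= last_bits; ++b) {
        unsigned long long side = side_for_bits(b);
        std::printf("%2d  %llux%llu  %llu points  %.2f GiB\n", b, side, side,
                    static_cast<unsigned long long>(points_for_bits(b)),
                    bytes_for_bits(b) / (1024.0 * 1024.0 * 1024.0));
    }
}
```

For example, a bit size of 12 gives 2,415,919,104 bytes: 2.25 GiB, or about 2.4 GB in decimal units.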

make_ppm takes two arguments; the input file generated by brotmap, and the output file which will be in PPM format (subformat P6).

See an example PNG here (a 12288x12288 greyscale image, though note it may stress your browser slightly). This is computed to an iteration count of 4096, with binary digits of 12. Note that the 2.3 GB of source data for this results in a PNG file of only 4 MB…

A smaller example (binary digits of 10) is here.

What’s next?

Better command line parsing (e.g. for iteration count, step size…) - there’s some in there, but it’s very crude.

Incremental spatial updates - incremental updates based on iteration count are nice, but what’s really needed are incremental resolution increases. It should be possible to double the resolution in each direction by keeping the current set of data as one of every four points in the new grid, so that (assuming that data is available) only three quarters of the new points need to be evaluated. It might make sense to completely change the structure of the data in memory / on-disk to support this operation.
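One way to picture the resolution-doubling idea, as a sketch under simplifying assumptions: a row-major grid whose origin stays fixed, and a hypothetical `sample` record holding just a “known” flag and an iteration count (brotmap’s real layout differs). Halving the step puts old point (row, col) exactly on new point (2·row, 2·col), so its result can be copied across unchanged; the other three points in each 2x2 block still need computing.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical per-point record for this sketch: `known` is false for points
// that still have to be computed.
struct sample {
    bool known;
    long itercount;
};

// Double the resolution in each direction. With the step halved and the
// origin unchanged, old point (r, c) coincides with new point (2r, 2c), so its
// result is copied; every other new point starts out unknown.
std::vector<sample> refine(const std::vector<sample>& old_grid, int old_w, int old_h) {
    int new_w = 2 * old_w, new_h = 2 * old_h;
    std::vector<sample> grid(static_cast<std::size_t>(new_w) * new_h, sample{false, 0});
    for (int r = 0; r < old_h; ++r)
        for (int c = 0; c < old_w; ++c)
            grid[static_cast<std::size_t>(2 * r) * new_w + 2 * c] =
                old_grid[static_cast<std::size_t>(r) * old_w + c];
    return grid;
}

// Number of points still to be computed after refinement.
std::size_t unknown_count(const std::vector<sample>& grid) {
    std::size_t n = 0;
    for (const sample& s : grid)
        if (!s.known) ++n;
    return n;
}
```

For a 4x3 grid, the refined 8x6 grid has 48 points, of which 36 still need evaluating.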

C++11-based concurrency - it won’t add much functionally, though I’ll get round to automatically working out the appropriate number of threads to use.
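A rough idea of what a C++11 version might look like, with hypothetical names: `std::thread::hardware_concurrency()` supplies the thread count (it may return 0 when the count can’t be determined, hence the fallback), and a `std::atomic` counter replaces the mutex for handing out rows. The `work` callback must be safe to call concurrently for different rows. Depending on the toolchain, it may need compiling with `-pthread`.

```cpp
#include <atomic>
#include <cassert>
#include <thread>
#include <vector>

// hardware_concurrency() may return 0 if the number of cores is unknown.
unsigned choose_thread_count() {
    unsigned n = std::thread::hardware_concurrency();
    return n == 0 ? 1 : n;
}

// Call work(row) once for every row in [0, rows), spread across all cores.
// Rows are handed out through an atomic counter, so no mutex is needed.
template <typename F>
void parallel_rows(int rows, F work) {
    std::atomic<int> next_row{0};
    std::vector<std::thread> threads;
    unsigned nthreads = choose_thread_count();
    for (unsigned t = 0; t < nthreads; ++t) {
        threads.emplace_back([&] {
            for (int row = next_row++; row < rows; row = next_row++) work(row);
        });
    }
    for (std::thread& th : threads) th.join();
}
```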

Use of mmap-based IO in make_ppm as well as brotmap. Again, this won’t add anything new, but will clean things up.

Improvements to make_ppm - it should be possible to pull out a small section of the data and only render a selected area. Selectable colourmaps (something other than greyscale) would be nice too.

Distributed parallelism - this is a major step up in terms of complexity, but definitely doable. I like to keep things low-level and primitive (and yet still portable - that’s what POSIX is all about), so I’ll probably do something socket-based first, or maybe ZeroMQ…

Improved performance per core - the M-set calculation per point is very basic, with a single optimisation: it knows that points within the major circle and the main cardioid are within the M-set. Further optimisations could include SIMD parallelism (SSE3).
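The post doesn’t show brotmap’s exact check, so here’s a sketch of the standard closed-form tests for the two regions, with a hypothetical function name: the main cardioid, and the period-2 bulb (the circle of radius 1/4 centred on -1). Points passing either test are in the set and need no iteration at all; points failing both may still be in the set, so they are iterated as normal.

```cpp
#include <cassert>

// True if c = x + y*i lies in the main cardioid or the period-2 bulb, both of
// which are entirely inside the Mandelbrot set. A false result proves nothing.
bool in_cardioid_or_bulb(double x, double y) {
    // Main cardioid: q(q + (x - 1/4)) <= y^2 / 4, where q = (x - 1/4)^2 + y^2.
    double xq = x - 0.25;
    double q = xq * xq + y * y;
    if (q * (q + xq) <= 0.25 * y * y) return true;
    // Period-2 bulb: (x + 1)^2 + y^2 <= 1/16.
    double xp = x + 1.0;
    return xp * xp + y * y <= 0.0625;
}
```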

Smooth colouring - most Mandelbrot plotters don’t just use a simple iteration-count-to-colour mapping, but compute some ‘distance’ factor from which to derive the colour.
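One common approach, named here plainly because it may not be the one meant above, is the normalised iteration count $\mu = n + 1 - \log_2 \log_2 |z_n|$, where $z_n$ is the first iterate past the bailout radius (this is a different technique from distance estimation). It needs $|z_n|$ at bailout, which the storage scheme described earlier discards when it replaces a point’s values with the iteration count, so using it would mean changing the file format. The function name is hypothetical.

```cpp
#include <cassert>
#include <cmath>

// Normalised iteration count: mu = n + 1 - log2(log2|z_n|), where z_n is the
// first iterate whose modulus exceeded the bailout radius. With a bailout
// radius of at least 2, |z_n| > 1, so both logarithms are defined.
// Larger bailout radii give smoother results.
double smooth_iteration(long n, double zr, double zi) {
    double log2_modulus = std::log2(std::hypot(zr, zi));  // log2|z_n|
    return static_cast<double>(n) + 1.0 - std::log2(log2_modulus);
}
```

Escaping one iteration later with |z| squared (|z| = 4 at n = 5 versus |z| = 16 at n = 6) gives the same value, which is what removes the visible banding between iteration counts.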
