I had an awesome time at PyCon UK last weekend. I went to my first code dojo where I helped write a text-based adventure game (with a disturbing plot!), played with using Python on a Raspberry Pi to access the GPIO, started a new Python module for my own use, and gave my second ever lightning talk, titled ‘Typing to Yourself’. This is 'the blog of the talk'.

What's this about then?

I’d found that IM chat logs often held a lot of information, and that the timestamps were frequently useful. The conversational nature of the chats also gave subtle clues about things such as confidence levels, which a more formal report would lose. I started to think that it would be worth having that even if I wasn’t chatting to someone else. And so the madness started….

Typing to yourself. About stuff. Preferably as it happens, in ‘real time’ (is there another kind?). I suppose some people use Twitter like this, but I (and I'm sure my employer) like it that I keep at least some things to myself.

I've been doing this for a few months now, and have a single file with about 1300 lines of notes. Originally I cleared it out every few days, but then thought that keeping it all around might be of some benefit.

Why Type to Yourself?

Record snippets of new knowledge

There are hundreds of small things I’ll find out about and then not look at again for 6 months. And chances are, I’ll forget all about them. It’s worth recording that sort of stuff. Things like pv, a new and useful iptables rule, the name of a nice vim colour scheme.

Decouple recording from reporting

In a knowledge-based job, where part of the task is continual learning and research, there is always the risk of going off into blind alleys, dead-ends, or things more interesting than what I should really be working on. Chances are, even if it’s tangential to the work I should be doing, it’s still useful in itself, and worth recording. If I’ve just spent half an hour reading about ZeroMQ, I’ll include that. I might not record it in a list of training activities for the week though. Recording first defers disclosure, allowing selection to take place at a later point, and therefore encourages more interesting and accurate reporting. By separating recording from (for example) time reporting systems, we can post-process and filter the raw data later. It's the same as RAW and JPEG files from a camera; it’s not a bad thing to have the RAW data even if the end result is somewhat different. We are likely to be more honest if we type to ourselves, including feelings, distractions, etc, some of which will be useful at a later point.
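
As a rough sketch of that later filtering step: assuming each entry is a single line starting with a `YYYY/MM/DD HH:MM` timestamp, a few lines of shell can pull out one week's raw entries for a report. The function name `track_between` is made up for illustration and isn't part of my actual tooling.

```shell
#!/bin/bash
# Hypothetical helper: print log entries dated within [from, to] inclusive.
# Assumes one entry per line, formatted as "YYYY/MM/DD HH:MM text".
track_between() {
    local log_file=$1
    local from=$2
    local to=$3
    # YYYY/MM/DD sorts lexicographically, so plain string comparison
    # on the first field is enough to select a date range
    awk -v from="$from" -v to="$to" '$1 >= from && $1 <= to' "$log_file"
}

# Example (the path is an assumption):
# track_between ~/Dropbox/editfile/track 2012/09/17 2012/09/21
```

The output is still the raw log; deciding what goes into the report remains a manual step.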

Record why decisions were made

We make dozens of design decisions every day, and the vast majority of these seem obvious at the time. But there are some that aren’t ever obvious, and some that won’t be tomorrow even if they are now. Recording why we make the choices we do is important, even if just to force us to make them consciously. And it can be very useful to document dead-end design decisions which we try and ultimately give up on, in the hope of avoiding repeating them in the future.

Overcome creative blocks

Writer’s block affects programmers as well as novelists. Or at least it affects me from time to time. Sometimes I sit there for minutes on end, simply staring at the screen. I’ve found that explaining my dilemma to myself through the medium of typing to myself can often overcome this. Sometimes any activity can be a key to being able to think clearly about a problem again. Not only that, but regularly writing down what you're doing can be a great antidote to distraction and procrastination. This comes back to being able to be honest with ourselves about what we're doing - writing this down makes us think about it, be able to criticise it, and therefore more quickly be able to change direction.

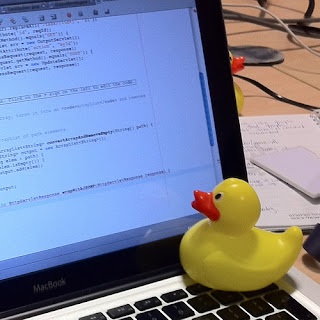

Rubber duck debugging

This is a technique which uses vocalisation of a problem we’re facing to make us think more clearly about the problem; to take a step back, and explain to a toy rubber duck - ideally one with no previous knowledge of the problem we’re facing - what’s going on and how we’re trying to fix it. Just explaining it often helps us realise the problem. But rubber ducks are tricky to find at the crucial moment, and people think programmers are mad enough already without seeing us all talking to little ducks sitting on our desks. No, typing to ourselves, writing down the problem, is clearly much safer. After all, a programmer writing down a problem to themselves looks highly productive, rather than slightly mad.

Searchable history

We version control our code. Why not version our thoughts and activities? Write stuff down. Be able to go back in time and revisit those thoughts at a later date. Use it to store our short-term thoughts just before a meeting or break, so picking up where we left off is easy. That sort of stuff. Or to record surprising errors which we can’t reproduce and just put down to ‘something must have been set up wrong’. But then we start to find that we’ve already recorded it two months earlier…
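
Because the log is plain text, searching it needs nothing more than grep. A minimal sketch, assuming one entry per line; the function name `track_search` and the example path are hypothetical:

```shell
#!/bin/bash
# Hypothetical helper: case-insensitive literal search of a text log.
track_search() {
    local log_file=$1
    shift
    # -i: ignore case; -F: treat the term as a literal string, not a regex;
    # -- ends option parsing so terms starting with '-' are safe
    grep -i -F -- "$*" "$log_file"
}

# Example (the path is an assumption):
# track_search ~/Dropbox/editfile/track "iptables"
```

If entries start with a timestamp, each match also shows when the note was written.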

How should I go about that?

Timestamped

Typing to yourself is an activity best done in real-time. Doing it later may still have some benefit, but the stream of consciousness brain-dump in the background has a lot of value which is lost if we’re just typing a historical report on what happened earlier. The point of typing to yourself is that having a record is useful; trying to remember stuff to record after the fact is lossy and a waste of time. Having things timestamped is a motivation (‘I’ve not written anything for 2 hours!’) and useful for searching history - finding out just when that bug appeared last.
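
The ‘I’ve not written anything for 2 hours!’ check can be worked out from the log itself. The sketch below assumes GNU date (for the `-d` option) and entries that start with a 16-character `YYYY/MM/DD HH:MM` timestamp; `minutes_since_last_entry` is a made-up name for illustration.

```shell
#!/bin/bash
# Hypothetical helper: minutes between the last log entry and a given time.
# Assumes GNU date and entries formatted as "YYYY/MM/DD HH:MM text".
minutes_since_last_entry() {
    local log_file=$1
    local now_stamp=$2
    local last_stamp last_epoch now_epoch
    # the first 16 characters of the last line are its timestamp;
    # swap '/' for '-' so date sees an unambiguous ISO-style date
    last_stamp=$(tail -n 1 "$log_file" | cut -c1-16 | tr '/' '-')
    now_stamp=$(printf '%s' "$now_stamp" | tr '/' '-')
    last_epoch=$(date -d "$last_stamp" +%s)
    now_epoch=$(date -d "$now_stamp" +%s)
    echo $(( (now_epoch - last_epoch) / 60 ))
}

# Example (the path is an assumption):
# minutes_since_last_entry ~/Dropbox/editfile/track "$(date '+%Y/%m/%d %H:%M')"
```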

Centralised

For a given context (e.g. work), there should be a single log on which you type to yourself. Perhaps there shouldn’t even be multiple contexts; everything should go in one big fat log. But it should be a single log, and yet available everywhere. Having to merge logs, or wondering where the latest version is, or knowing but not having access to it - all bad things. Dropbox is good.

Low friction

The whole point of ‘typing to yourself’ is that it shouldn’t be a context switch. I tend to keep a tmux pane open with editfile running (as track -t). Switching into it is just a case of Ctrl-A/cursor key. Then type stuff. Then Ctrl-A/cursor the other way. There’s no alt-tabbing, no windows changing focus or popping in front of each other. And importantly, I can see what’s there at all times, so it’s always in my consciousness - I don’t have to ‘swap it back in’ when I switch to it. Another aspect of low-friction is that the data itself should be widely available to programs to use, whether for searching, editing, or anything else. A text file is ideal.

An Implementation

I’m keener on the ideas here than the implementation, but without an implementation it couldn’t work. I use my editfile program for almost all longer pieces of writing - blog posts, ideas, plans. My ‘typing to myself’ log is just an editfile ‘instance’ used in ‘time track’ mode, which keeps all the content in a single text file on Dropbox. I wrote about editfile in an earlier blog post.

editfile started out as a very simple bash script:

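#!/bin/bash
# Open the file named after this script in the editor.
# $HOME is used because ~ is not expanded inside double quotes.
$EDITOR "$HOME/Dropbox/editfile/$(basename "$0")"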

but is now a more complex bash script, including search, a two level hierarchy (I had that before iCloud decided it was a good idea!), command-line completion, and the time track mode I use for typing to myself.

The time-track mode has a couple of useful features - readline & history integration, and prompting with and storing a timestamp. It’s not perfect; one limitation is that the timestamp in the prompt doesn’t update in real time (although the current time, rather than the potentially out-of-date displayed time, is what gets stored in the text file). The implementation of the time-track loop is the following:

now=$(date '+%Y/%m/%d %H:%M')

# read history from previous sessions
history -r "$HIST_FILE"

# -e enables readline editing; -r keeps backslashes in the input literal
while read -r -e -p "$now >> " track_input ; do
    # refresh the timestamp so the stored time is when the line was entered
    now=$(date '+%Y/%m/%d %H:%M')
    if [[ -z $track_input ]] ; then
        # don't store blank lines
        continue
    fi
    # use -- to indicate end to options e.g. if track_input
    # starts with '->' which previously caused errors
    history -s -- "$track_input"
    echo "$now $track_input" >> "$TARGET_PATH"
done

# append current session to history
history -a "$HIST_FILE"

# ensure bash prompt starts on a new line
echo
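
Stripped of the readline handling, the core of that loop is just "append a timestamped line". A one-shot sketch of the same idea, handy for adding an entry from another script; `track_append` is a hypothetical name and not part of editfile:

```shell
#!/bin/bash
# Hypothetical one-shot variant of the loop body (not part of editfile):
# append a single timestamped entry to a log file.
track_append() {
    local log_file=$1
    shift
    local entry="$*"
    # don't store blank entries, mirroring the interactive loop
    [ -n "$entry" ] || return 0
    # printf avoids surprises with entries that start with '-'
    printf '%s %s\n' "$(date '+%Y/%m/%d %H:%M')" "$entry" >> "$log_file"
}

# Example (the path is an assumption):
# track_append ~/Dropbox/editfile/track "-> reading about ZeroMQ"
```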

I use this every day at work, and it's got to the stage where I want to use it more. I've got plenty of ideas for things to integrate into my implementation, though the real essence of it doesn't need anything clever really.